OpenClaw Under the Hood: Building a Deterministic Trading Agent with an MCP Server

OpenClaw is best understood as a self-hosted gateway plus agent runtime system, not as a framework where every user-facing agent is a Python class or long-running daemon. The Gateway receives messages from channels, resolves them to an agent and session, runs one serialized agent turn, records transcript state, executes tools, and delivers the answer back through the originating channel. The official agent-loop docs summarize the core path as “intake → context assembly → model inference → tool execution → streaming replies → persistence,” with one serialized run per session.

That matters when building something like DBOT, our deterministic financial analyst, which must compute DCFs, query financial facts, rank companies, and never hallucinate numbers. In OpenClaw, the right design is usually:

Agent = prompt/config/session/runtime scope

Skill = markdown guidance for when/how to act

Tool = typed callable capability

MCP server = cross-language tool server, ideal for Python/pandas

Plugin = native TypeScript OpenClaw extension

Harness/runtime = replacement model loop, not business logic

The mistake is to say, “I’ll implement the agent as a Python script.” That is not how normal OpenClaw agents work. You implement deterministic logic as tools, usually through MCP for Python/data work, and then give the agent markdown instructions that force it to use those tools.

Anatomy of a normal OpenClaw agent

A normal OpenClaw agent is not a daemon, service, or Python class. It is a configured scope: agentId, workspace, optional model/runtime overrides, tool permissions, skills, auth profile, and session store.

OpenClaw’s multi-agent docs say the Gateway can host one or many agents side by side. Each agent has its own workspace, agentDir, and sessions; default single-agent mode uses agentId: main, workspace ~/.openclaw/workspace, and sessions under ~/.openclaw/agents/main/sessions. A minimal financial analyst agent might look like this:

{
  agents: {
    defaults: {
      model: "anthropic/claude-sonnet-4-6"
    },
    list: [
      {
        id: "dbot",
        workspace: "~/.openclaw/workspace-dbot",
        skills: ["finance-analysis"],
        tools: {
          allow: [
            "finance_dcf__compute_dcf",
            "finance_dcf__query_facts",
            "finance_dcf__rank_metrics"
          ]
        }
      }
    ]
  },
  bindings: [
    {
      agentId: "dbot",
      match: { channel: "telegram", accountId: "finance" }
    }
  ]
}

The workspace then contains markdown files:

~/.openclaw/workspace-dbot/
  IDENTITY.md
  SOUL.md
  AGENTS.md
  USER.md
  TOOLS.md
  skills/
    finance-analysis/
      SKILL.md

These files are not executable code. They are context. The runtime loads eligible skills and workspace instructions, combines them with the transcript and tool catalog, then calls the model. OpenClaw’s tools docs make the distinction explicit: tools are typed functions the agent can invoke, while skills are markdown files injected into the system prompt to teach the agent when and how to use tools. A DBOT skill should therefore say things like:

---
name: finance-analysis
description: Use deterministic finance tools for valuation, facts, ranking, and audit trails.
---

Rules:
- Never compute DCF, WACC, NPV, IRR, or ranking metrics manually.
- Use `finance_dcf__compute_dcf` for DCF calculations.
- Use `finance_dcf__query_facts` for company fundamentals.
- If a tool returns `no_data`, report missing data.
- Preserve assumptions and audit IDs from tool results.

That skill steers the model. It does not itself calculate the DCF.

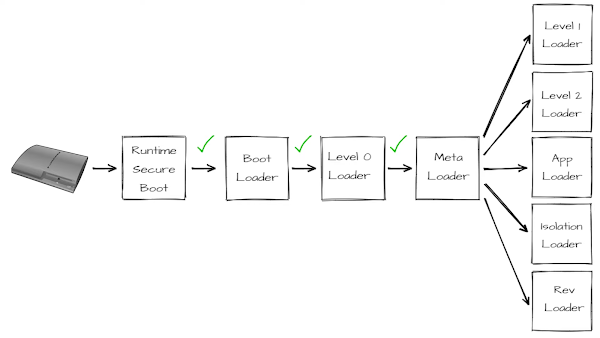

How OpenClaw receives and routes messages

At runtime, OpenClaw acts as the control plane.

A user message first enters through a channel adapter, CLI command, API call, or WebSocket client. The channel adapter normalizes the inbound event into a Gateway request.

The Gateway then applies routing and bindings. This is where OpenClaw decides which configured agent should receive the message. For example, a Telegram account named finance might route to agentId: "dbot", while a WhatsApp personal account routes to agentId: "home".

After the agent is resolved, OpenClaw resolves the session key. The session key identifies the conversation thread: the same Telegram chat, CLI session, API session, or sub-agent session should continue using the same transcript.
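
The routing and session steps above can be sketched as two small lookups. This is a minimal illustration, assuming bindings match on exact channel and accountId values and that unmatched messages fall back to a default agent. The names `Binding`, `resolve_agent`, and `session_key`, and the key layout itself, are hypothetical and are not OpenClaw's actual implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Binding:
    agent_id: str
    channel: str
    account_id: str


def resolve_agent(bindings: list[Binding], channel: str, account_id: str,
                  default_agent: str = "main") -> str:
    """Return the agent of the first binding that matches the inbound event."""
    for binding in bindings:
        if binding.channel == channel and binding.account_id == account_id:
            return binding.agent_id
    return default_agent  # assumption: unmatched traffic goes to a default agent


def session_key(agent_id: str, channel: str, chat_id: str) -> str:
    """Hypothetical key layout: same agent and same chat map to one transcript."""
    return f"agent:{agent_id}:{channel}:{chat_id}"


bindings = [
    Binding("dbot", "telegram", "finance"),
    Binding("home", "whatsapp", "personal"),
]
agent = resolve_agent(bindings, "telegram", "finance")
key = session_key(agent, "telegram", "chat-42")
```

The point of the sketch is that the key is a pure function of agent and conversation, so a follow-up message in the same chat lands on the same transcript.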

Before the runtime sees the message, OpenClaw conceptually queues it twice:

per-session queue -> prevents two turns in the same conversation from running at once

global lane queue -> caps total concurrency across categories like main, cron, or subagent
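
The two queueing layers can be approximated with standard asyncio primitives. The sketch below is an analogy under stated assumptions, not OpenClaw's scheduler: it uses one lock per session key and one semaphore per lane, and the `TurnScheduler` class and its counters are invented for illustration.

```python
import asyncio
from collections import defaultdict


class TurnScheduler:
    """Illustrative only: one lock per session, one semaphore per lane."""

    def __init__(self, lane_caps: dict[str, int]):
        self._session_locks = defaultdict(asyncio.Lock)
        self._lanes = {name: asyncio.Semaphore(cap) for name, cap in lane_caps.items()}
        self.running_in_session = defaultdict(int)
        self.running_in_lane = defaultdict(int)
        self.peak_session = defaultdict(int)  # highest overlap seen per session
        self.peak_lane = defaultdict(int)     # highest overlap seen per lane

    async def run_turn(self, session_key: str, lane: str, turn):
        # Per-session lock: turns in the same conversation never overlap.
        async with self._session_locks[session_key]:
            # Lane semaphore: caps total concurrency for this category.
            async with self._lanes[lane]:
                self.running_in_session[session_key] += 1
                self.running_in_lane[lane] += 1
                self.peak_session[session_key] = max(
                    self.peak_session[session_key], self.running_in_session[session_key])
                self.peak_lane[lane] = max(self.peak_lane[lane], self.running_in_lane[lane])
                try:
                    return await turn()
                finally:
                    self.running_in_session[session_key] -= 1
                    self.running_in_lane[lane] -= 1


async def demo():
    sched = TurnScheduler({"main": 2})

    async def turn():
        await asyncio.sleep(0.01)  # stands in for model inference and tool calls
        return "ok"

    # Six turns spread over three sessions, all on the "main" lane.
    jobs = [sched.run_turn(f"session-{i % 3}", "main", turn) for i in range(6)]
    return sched, await asyncio.gather(*jobs)


sched, results = asyncio.run(demo())
```

After the run, `sched.peak_session` never exceeds 1 for any session and `sched.peak_lane["main"]` never exceeds the configured cap of 2.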

Only then does OpenClaw assemble the actual model context: system prompt, workspace markdown, eligible skills, previous transcript messages, current user input, tool catalog, model/runtime config, and session metadata. That assembled context enters the selected model/runtime loop.

Inside the loop, the model produces either ordinary assistant text or tool calls. If it calls a tool, OpenClaw executes that tool according to the effective tool policy, captures the result, appends it back into the runtime context, and resumes the loop.

This can repeat multiple times:

model -> tool call -> tool result -> model -> tool call -> tool result -> model

When the model finally returns assistant output instead of another tool call, OpenClaw writes the completed turn to the transcript store. If the run is deliverable, the Gateway sends the answer back through the original channel or returns it to the CLI/API caller.
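
The loop described above can be sketched with a scripted stand-in for the model. Everything here is an assumption made for illustration: the message shapes, the `run_turn` function, the `max_steps` guard, and the stub tool, which returns a `no_data` placeholder rather than real fundamentals.

```python
import json
from typing import Callable


def run_turn(model: Callable[[list[dict]], dict],
             tools: dict[str, Callable[..., dict]],
             allowed: set[str],
             user_input: str,
             max_steps: int = 8) -> list[dict]:
    """Call the model until it returns text instead of a tool call."""
    context = [{"role": "user", "content": user_input}]
    for _ in range(max_steps):
        reply = model(context)
        context.append(reply)
        call = reply.get("tool_call")
        if call is None:
            return context  # final assistant text: the turn is complete
        name = call["name"]
        if name not in allowed or name not in tools:
            result = {"status": "denied", "tool": name}  # policy check before execution
        else:
            result = tools[name](**call["arguments"])
        # The tool result is appended so the next model call can see it.
        context.append({"role": "tool", "name": name,
                        "content": json.dumps(result, sort_keys=True)})
    raise RuntimeError("turn exceeded max_steps")


def scripted_model(context: list[dict]) -> dict:
    """Stub model: ask for facts once, then answer from the tool result."""
    tool_messages = [m for m in context if m["role"] == "tool"]
    if not tool_messages:
        return {"role": "assistant",
                "tool_call": {"name": "finance_dcf__query_facts",
                              "arguments": {"ticker": "AAPL"}}}
    return {"role": "assistant",
            "content": f"Facts received: {tool_messages[-1]['content']}"}


def stub_query_facts(ticker: str) -> dict:
    # Placeholder payload; a real tool would read from a facts store.
    return {"ticker": ticker, "status": "no_data"}


transcript = run_turn(scripted_model,
                      {"finance_dcf__query_facts": stub_query_facts},
                      {"finance_dcf__query_facts"},
                      "Get AAPL facts")
```

The resulting transcript has four entries: the user message, the assistant tool call, the tool result, and the final assistant text.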

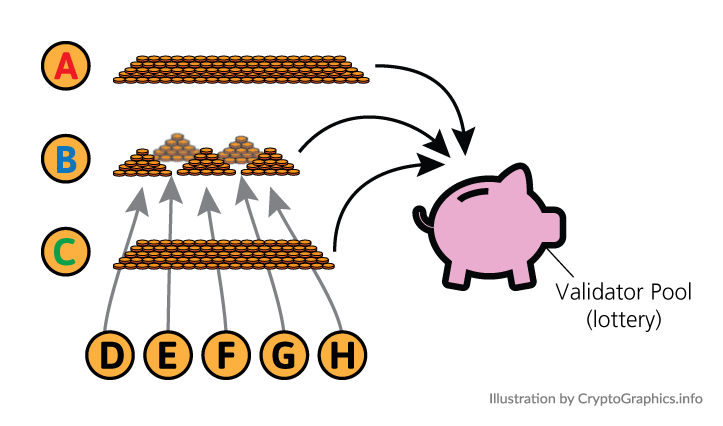

Parent agents, sub-agents, and message flow

Sub-agents are best understood as child OpenClaw sessions created by another agent, not as separate services or automatic containers. The parent agent does not directly “call a worker process.” Instead, it asks the Gateway to create and run another agent session in the background.

A parent/sub-agent flow involves the following actors:

- User: The human or external client asking for work.

- Gateway: The OpenClaw control plane. It owns routing, sessions, queues, policy checks, transcripts, task tracking, and delivery.

- Parent agent session: The main agent conversation that received the original user request. It plans the work, decides whether to spawn sub-agents, and synthesizes the final answer.

- Subagent lane: A named background execution lane. It queues child agent runs and limits how many sub-agent jobs can run concurrently.

- Child sub-agent session: A separate OpenClaw session created for a specific task. It has its own session key, transcript, context, metadata, tool policy, and final output.

- Deterministic tools: Typed tools such as MCP tools or native plugin tools. These perform facts retrieval, DCF math, ranking, validation, or audit logging.

First, the user sends a request:

"Analyze AAPL and MSFT. Compare fundamentals, run a DCF, and tell me which is more attractive."

The Gateway routes that request to the configured parent agent, for example agentId: "dbot". The parent session starts a normal model/runtime turn. It reads the user request, its workspace instructions, its skills, the available tool catalog, and any previous transcript context.

The parent then decides that the job has multiple separable parts. For example:

1. Retrieve canonical financial facts.

2. Compute DCF valuation.

3. Review assumptions and summarize.

Instead of doing everything in one long turn, the parent can call sessions_spawn to create a child run:

{
  "agentId": "facts-agent",
  "label": "AAPL/MSFT facts",
  "task": "Retrieve revenue, FCF, debt, cash, shares outstanding, margins, and growth data for AAPL and MSFT. Use only deterministic finance tools. Return structured facts and missing fields.",
  "runTimeoutSeconds": 300
}

This call goes back to the Gateway. The Gateway checks whether the parent is allowed to spawn that target agent. If policy allows it, OpenClaw creates a new child session key, conceptually like:

agent:facts-agent:subagent:<uuid>

That child session is then queued on the subagent lane. The lane is not a separate product like Kafka or Celery. It is an OpenClaw execution lane used to control concurrency for background agent work.

When the child run starts, the child agent receives the task as its input. It does not magically share the parent’s internal thoughts or entire transcript by default. It gets a task message, its own agent configuration, its own workspace context, its own allowed tools, and its own transcript.
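
A rough model of what the Gateway does on a spawn request is shown below. It is a sketch under assumptions: the `spawn_allowlist` structure, the `spawn_child_session` function, and the returned dictionary are hypothetical. Only the session key shape follows the conceptual format given above.

```python
import uuid


def spawn_child_session(parent_agent: str, target_agent: str, task: str,
                        spawn_allowlist: dict[str, set[str]]) -> dict:
    """Check spawn policy, then create an isolated child session record."""
    if target_agent not in spawn_allowlist.get(parent_agent, set()):
        raise PermissionError(f"{parent_agent} may not spawn {target_agent}")
    key = f"agent:{target_agent}:subagent:{uuid.uuid4()}"
    return {
        "session_key": key,
        "lane": "subagent",  # queued on the background lane
        # The child starts from the task message only, not the parent transcript.
        "transcript": [{"role": "user", "content": task}],
    }


policy = {"dbot": {"facts-agent", "valuation-agent"}}
child = spawn_child_session(
    "dbot", "facts-agent",
    "Retrieve revenue and FCF for AAPL and MSFT using deterministic tools only.",
    policy,
)
```

Two spawns of the same target agent produce two different session keys, which is what keeps their transcripts separate.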

The child might then call deterministic tools:

finance_dcf__query_facts({
  tickers: ["AAPL", "MSFT"],
  fields: ["revenue", "free_cash_flow", "net_debt", "shares_outstanding"],
  period: "ttm"
})

When the child finishes, the result is recorded in its own transcript and task state. Then OpenClaw announces the completion back to the requester, meaning the parent session receives the child’s final result as an internal follow-up/update. The parent can then spawn another child, for example:

{
  "agentId": "valuation-agent",
  "label": "AAPL/MSFT DCF",
  "task": "Using the facts returned by facts-agent, compute a DCF for AAPL and MSFT. Use finance_dcf__compute_dcf only. Return assumptions, fair value per share, sensitivity table, warnings, and audit IDs.",
  "runTimeoutSeconds": 300
}

The valuation child runs the same way: separate session, queued background execution, own transcript, deterministic tool calls, final result. Finally, the parent receives all child outputs and synthesizes the user-facing answer:

- facts-agent returned canonical financial facts

- valuation-agent returned DCF results and audit IDs

- parent agent compares the results

- parent writes the final explanation to the user

The important point is that the parent is the orchestrator, not the executor of every detail. The child sessions do delegated work, and deterministic tools do the actual hard computation or retrieval. A compact flow is:

User request
↓
Parent agent plans
↓
Parent spawns child session
↓
Gateway creates child session and queues it
↓
Child receives task as its own input
↓
Child calls allowed tools
↓
Child writes final result
↓
Gateway announces result to parent
↓
Parent synthesizes final answer
↓
Gateway delivers answer to user

There are three isolation layers to keep separate:

- Session isolation: The child has a separate session key and transcript.

- Context isolation: The child does not automatically inherit the parent transcript unless forked context is used.

- Tool/policy isolation: The child may have a narrower tool set than the parent. Leaf sub-agents commonly lose orchestration/session tools, so they can do assigned work but cannot freely spawn or message other sessions.

This is not the same as OS-level isolation. By default, sub-agents are logical OpenClaw sessions running through the Gateway/runtime system. Stronger isolation requires sandboxing or external process/container controls.

ACP sessions versus normal sub-agents

ACP sessions are easy to confuse with native sub-agents. They are different. OpenClaw’s ACP docs say ACP is for running external coding harnesses such as Claude Code, Codex, Cursor, Gemini CLI, OpenCode, and others through an ACP backend plugin. The docs explicitly contrast ACP sessions with sub-agent runs: ACP uses an external harness runtime, while sub-agents use OpenClaw’s native sub-agent runtime; their session keys also differ, e.g. agent:<agentId>:acp:<uuid> versus agent:<agentId>:subagent:<uuid>.

Use ACP when the thing doing the work is an external runtime like Codex or Claude Code. Use native sub-agents when you want OpenClaw-managed child conversations.

Neither is the primary answer for deterministic DCF logic. ACP and sub-agents are orchestration layers. DCF should be a tool.

Three ways to add deterministic logic

There are three real options.

Option 1: Skill only — not enough

A skill is markdown. It can tell the agent:

- Always use compute_dcf for DCF calculations.

- Never compute valuation numbers by hand.

But it cannot enforce DCF math unless a tool exists. OpenClaw’s docs say skills teach the agent how to use tools and are loaded from workspace/shared/plugin locations. Use skills for operating policy, not deterministic computation.

Option 2: MCP server — best default for Python/pandas/data

MCP is usually the right answer for DBOT.

MCP is not a tool. MCP is a protocol. An MCP server exposes tools. OpenClaw stores outbound MCP server definitions under mcp.servers; openclaw mcp set writes config only and does not connect to or validate the target server at registration time.

For finance:

finance-mcp/
  pyproject.toml
  src/finance_mcp/
    server.py
    dcf.py
    facts.py
    metrics.py
    schemas.py
    audit.py

Register:

openclaw mcp set finance_dcf '{
  "command": "uv",
  "args": [
    "run",
    "--project",
    "/home/me/finance-mcp",
    "python",
    "-m",
    "finance_mcp.server"
  ],
  "env": {
    "FACTS_DB": "/data/fundamentals.duckdb"
  }
}'

Then expose typed tools such as:

finance_dcf__query_facts
finance_dcf__compute_dcf
finance_dcf__compute_wacc
finance_dcf__rank_metrics
finance_dcf__audit_replay
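
A server.py for this layout might look roughly like the sketch below. It assumes the official MCP Python SDK (the `mcp` package) and its `FastMCP` helper. The import is guarded so the pure function can be exercised without the SDK installed. The WACC formula is the standard textbook one, and the function signature and return shape are illustrative choices, not a confirmed DBOT contract.

```python
try:
    from mcp.server.fastmcp import FastMCP  # MCP Python SDK
except ImportError:  # the pure function below still works without the SDK
    FastMCP = None


def compute_wacc(equity_value: float, debt_value: float, cost_of_equity: float,
                 cost_of_debt: float, tax_rate: float) -> dict:
    """Weighted average cost of capital computed from explicit inputs only."""
    total = equity_value + debt_value
    if total <= 0:
        return {"status": "invalid_input",
                "reason": "equity_value + debt_value must be positive"}
    wacc = ((equity_value / total) * cost_of_equity
            + (debt_value / total) * cost_of_debt * (1 - tax_rate))
    return {"status": "ok", "wacc": round(wacc, 6)}


def build_server():
    """Register the tool; the input schema is derived from the type hints."""
    if FastMCP is None:
        raise RuntimeError("install the 'mcp' package to run the server")
    server = FastMCP("finance_dcf")
    server.tool()(compute_wacc)  # same effect as decorating with @server.tool()
    return server


def main() -> None:
    build_server().run()  # FastMCP defaults to the stdio transport
```

A real server.py would call `main()` under an `if __name__ == "__main__":` guard so that `python -m finance_mcp.server` starts the stdio server. The server registers the tool under its bare name, compute_wacc, and the agent would see it as finance_dcf__compute_wacc.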

Option 3: OpenClaw plugin — heavier, native TypeScript

A plugin is appropriate when you need native OpenClaw integration: channels, hooks, model providers, agent harnesses, native tools, skills, or Gateway lifecycle behavior. OpenClaw’s plugin docs describe plugins as packages that can register channels, model providers, tools, skills, speech, media, web search/fetch, and more. Use a TypeScript plugin if you need to hook into OpenClaw itself. Do not use a plugin just because you want pandas.

Why not just register a Python script as a tool?

You can run Python through an exec tool, but that is not the same as exposing a typed tool.

Path A: shell script through exec:

These issues arise when using a raw Python script via shell execution instead of a structured tool interface:

No schema — the function interface is not formally defined, so the model has to infer argument structure and expected outputs from prose.

Shell quoting — arguments must be encoded into a command string, which introduces fragile quoting/escaping errors, especially with nested JSON.

Parsing output — results come back as plain stdout text, requiring the model to interpret or extract structured data unreliably.

Cold start — every invocation launches a new Python process, adding startup latency on each call.

Heavy imports — libraries like pandas are reloaded every time, significantly increasing execution time.

Poor discovery — the capability is not visible as a named tool in the tool catalog, so the model cannot reliably “see” or select it.

Ambiguous output — responses are unstructured text, which can lead to inconsistent interpretation or hallucinated structure.
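
The shell quoting problem in particular is easy to reproduce. The sketch below builds the same arguments two ways, using only the standard library. The script name `dcf.py` and the argument payload are invented for illustration, and `payload_from` simulates how a POSIX shell would split the command rather than actually running it.

```python
import json
import shlex

args = {"ticker": "AAPL",
        "assumptions": {"note": "owner's base case", "terminal_growth": 0.02}}

# Path A: arguments embedded in a shell command string.
naive_cmd = f"python dcf.py '{json.dumps(args)}'"            # breaks on the apostrophe
safe_cmd = f"python dcf.py {shlex.quote(json.dumps(args))}"  # correct, but easy to forget


def payload_from(cmd: str):
    """Split the command as a POSIX shell would and decode the last argument."""
    try:
        return json.loads(shlex.split(cmd)[-1])
    except ValueError:  # covers both shlex quoting errors and JSON decode errors
        return None


# Path B: a typed tool call; arguments travel as JSON with no shell involved.
typed_call = {"name": "finance_dcf__compute_dcf", "arguments": args}
roundtrip = json.loads(json.dumps(typed_call))
```

Here `payload_from(naive_cmd)` returns None because the apostrophe ends the quoted string early, while the typed call round-trips unchanged.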

Path B: Python through MCP:

The advantage is not merely environment isolation. The major advantage is protocol and schema. The model sees a real typed function, sends JSON arguments, and receives structured JSON.

Is MCP an HTTP server?

Sometimes, not always.

OpenClaw’s MCP docs list several transport shapes.

- stdio launches a local child process and talks JSON-RPC over stdin/stdout.

- SSE/HTTP connects to a remote MCP server.

- streamable-http is another HTTP streaming transport.

For a local pandas finance engine, use stdio most of the time. That gives a separate OS process and separate Python environment without needing to operate a web server. But note the limits:

Separate process: runs as its own OS process (for stdio MCP), isolated from the main OpenClaw runtime.

Independent venv: uses its own Python environment if launched via tools like uv, venv, or poetry.

Separate memory: maintains its own memory space, with no shared objects between Node (OpenClaw) and Python.

Shared system access: still operates under the same user, filesystem, and network by default.

No sandbox: does not provide OS-level isolation unless explicitly wrapped in Docker, a separate user, or another sandboxing mechanism.
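
To make the stdio transport concrete, the sketch below frames one tool call as a newline-delimited JSON-RPC 2.0 message and answers it with a toy dispatcher. The `tools/call` method and the `content` result shape follow the MCP specification as I understand it. The initialize handshake and capability negotiation are deliberately omitted, and the `query_facts` stub with its `no_data` payload is a placeholder.

```python
import json


def encode_request(request_id: int, tool: str, arguments: dict) -> str:
    """One JSON-RPC 2.0 message per line, as written to the server's stdin."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    return json.dumps(message) + "\n"


def handle_line(line: str, tools: dict) -> str:
    """Toy server side: dispatch one request line, return one response line."""
    request = json.loads(line)
    params = request["params"]
    handler = tools.get(params["name"])
    if handler is None:
        response = {"jsonrpc": "2.0", "id": request["id"],
                    "error": {"code": -32602,
                              "message": f"Unknown tool: {params['name']}"}}
    else:
        result = handler(**params["arguments"])
        response = {"jsonrpc": "2.0", "id": request["id"],
                    "result": {"content": [{"type": "text",
                                            "text": json.dumps(result)}],
                               "isError": False}}
    return json.dumps(response) + "\n"


def stub_query_facts(ticker: str, period: str) -> dict:
    # Placeholder; a real tool would query the facts database.
    return {"ticker": ticker, "period": period, "status": "no_data"}


tools = {"query_facts": stub_query_facts}
wire = encode_request(1, "query_facts", {"ticker": "AAPL", "period": "ttm"})
response = json.loads(handle_line(wire, tools))
```

The request and response are matched by `id`, and nothing in the exchange depends on an HTTP server. The process boundary is just a pair of pipes.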

Is MCP stateful or stateless?

Both, depending on layer. Each MCP tool call is RPC-like: request -> response

So the agent should treat tools as stateless unless the tool contract documents state. But the MCP server process can be long-lived. It can cache a DataFrame, keep a DuckDB connection open, reuse an HTTP session, or keep preloaded factor models. OpenClaw’s MCP docs describe saved definitions and runtime consumption: definitions are stored centrally for runtimes to launch or configure later, rather than executed when mcp set is called.

For deterministic finance workflows, tools should behave as stateless at the interface level, even if they use internal caching for performance:

- Explicit inputs: each tool call should include all required data (e.g., assumptions, tickers, time ranges) so the result is fully reproducible.

- Deterministic output: the same inputs should always produce the same output, ideally with an audit_id or metadata for traceability.

- Safe caching: the implementation may cache data (e.g., price history, fundamentals) internally to improve performance, but this must not affect correctness or reproducibility.

Examples:

Good:

compute_dcf(cashflows, discount_rate, terminal_growth) -> result + audit_id

Also good:

query_facts(ticker, period) -> canonical facts + source metadata

Risky:

set_current_ticker("AAPL")

compute_dcf() // depends on hidden internal state

- Hidden state: designs that rely on previously set internal variables make results harder to audit, reproduce, and reason about.

- Non-reproducibility: if inputs are implicit, the same call may yield different results depending on prior calls or process state.

In practice: cache aggressively inside the tool implementation if needed, but require every external call to be self-contained and fully specified.
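
A self-contained compute_dcf in this style might look like the following sketch. It assumes a simple Gordon-growth terminal value and derives the audit_id by hashing the canonical JSON of the inputs. Both choices are illustrative rather than a confirmed DBOT design, and the cash flows used in the usage line are made-up round numbers.

```python
import hashlib
import json


def compute_dcf(cashflows: list[float], discount_rate: float,
                terminal_growth: float) -> dict:
    """DCF over explicit inputs only; identical inputs give identical output."""
    if not cashflows:
        return {"status": "no_data", "reason": "cashflows is empty"}
    if discount_rate <= terminal_growth:
        return {"status": "invalid_input",
                "reason": "discount_rate must exceed terminal_growth"}

    # Present value of the explicit forecast, discounting year t by (1 + r)^t.
    pv_explicit = sum(cf / (1 + discount_rate) ** t
                      for t, cf in enumerate(cashflows, start=1))
    # Gordon-growth terminal value on the final cash flow, discounted to today.
    terminal_value = (cashflows[-1] * (1 + terminal_growth)
                      / (discount_rate - terminal_growth))
    pv_terminal = terminal_value / (1 + discount_rate) ** len(cashflows)

    inputs = {"cashflows": cashflows, "discount_rate": discount_rate,
              "terminal_growth": terminal_growth}
    # Canonical serialization so the audit_id depends only on the inputs.
    canonical = json.dumps(inputs, sort_keys=True, separators=(",", ":"))
    audit_id = hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]

    return {"status": "ok",
            "enterprise_value": round(pv_explicit + pv_terminal, 2),
            "inputs": inputs,
            "audit_id": audit_id}


result = compute_dcf([100.0, 100.0], 0.10, 0.0)
```

With a flat 100 per year, 10% discount rate, and zero growth, the result collapses to the perpetuity value 100 / 0.10 = 1000, which makes the sketch easy to sanity-check. Repeating the call returns the same audit_id, and changing any input changes it.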

MCP scope, global registry, and per-agent restriction

openclaw mcp set finance_dcf ... writes a global server definition under mcp.servers. It is not scoped to a single agent at registration time. The docs say these commands manage OpenClaw-owned outbound MCP server definitions under mcp.servers, and that they do not connect to the target server immediately. So the server definition is global. Scoping happens later through agent tool policy.

Typical restrictions:

{
  agents: {
    list: [
      {
        id: "dbot",
        tools: {
          allow: [
            "finance_dcf__compute_dcf",
            "finance_dcf__query_facts",
            "finance_dcf__rank_metrics"
          ]
        }
      },
      {
        id: "support",
        tools: {
          deny: ["bundle-mcp"]
        }
      }
    ]
  }
}

The exact config names can vary by OpenClaw version, so verify with:

openclaw agents list --bindings
openclaw mcp list
openclaw mcp show finance_dcf --json
/tools
/status

OpenClaw docs also explain that skill visibility and agent skill allowlists are separate: shared skills can be visible globally, but each agent can have its own effective skill list. The same architectural idea applies to tools: global availability does not mean every agent should be allowed to call every tool.
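
The gap between global availability and per-agent permission can be modeled as a filter over the tool catalog. The sketch below assumes simple semantics: an allow list, if present, restricts the catalog, and a deny list always wins. OpenClaw's real policy evaluation, including group names such as the one in the config above, may behave differently, and `effective_tools` is a hypothetical helper.

```python
from typing import Iterable, Optional


def effective_tools(catalog: Iterable[str],
                    allow: Optional[Iterable[str]] = None,
                    deny: Optional[Iterable[str]] = None) -> set[str]:
    """Assumed semantics: allow narrows the catalog, deny removes last."""
    tools = set(catalog)
    if allow is not None:
        tools &= set(allow)
    return tools - set(deny or ())


# Globally registered tools, visible to the Gateway as a whole.
catalog = {
    "finance_dcf__compute_dcf",
    "finance_dcf__query_facts",
    "finance_dcf__rank_metrics",
    "finance_dcf__audit_replay",
}

dbot_tools = effective_tools(
    catalog,
    allow=["finance_dcf__compute_dcf", "finance_dcf__query_facts",
           "finance_dcf__rank_metrics"],
)
support_tools = effective_tools(catalog, deny=catalog)  # no finance tools at all
```

Under these assumptions dbot sees three of the four registered tools, and the support agent sees none, even though the server definition is global.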

Name collisions

MCP servers can expose common names like search, query, status, or calculate. If every server exposed bare names directly, collisions would be inevitable. OpenClaw avoids this by surfacing MCP tools with server-qualified names, typically like:

<server_name>__<tool_name>

So if your server is finance_dcf and it exposes compute, the agent sees something like: finance_dcf__compute

This lets another server expose its own compute without ambiguity:

tax_model__compute
risk_engine__compute
finance_dcf__compute

For DBOT, pick explicit names anyway:

finance_dcf__compute_dcf
finance_dcf__compute_wacc
finance_dcf__query_facts
finance_dcf__rank_metrics
finance_dcf__audit_replay

Avoid vague tool names like calculate.
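
The naming scheme can be sketched in a few lines. This assumes the double-underscore convention described above. The `qualify` and `build_catalog` helpers are hypothetical and only show why server-qualified names remove ambiguity.

```python
def qualify(server: str, tool: str) -> str:
    """Build the server-qualified name the agent would see."""
    return f"{server}__{tool}"


def build_catalog(servers: dict[str, list[str]]) -> dict[str, tuple[str, str]]:
    """Map each qualified name back to its (server, tool) pair."""
    catalog: dict[str, tuple[str, str]] = {}
    for server, tools in servers.items():
        for tool in tools:
            name = qualify(server, tool)
            if name in catalog:
                raise ValueError(f"duplicate tool name: {name}")
            catalog[name] = (server, tool)
    return catalog


# Three servers all expose a bare "compute" tool without colliding.
catalog = build_catalog({
    "tax_model": ["compute"],
    "risk_engine": ["compute"],
    "finance_dcf": ["compute", "query_facts"],
})
```

Each qualified name resolves to exactly one server, so a call to finance_dcf__compute can never be routed to the tax or risk engine.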

Security and isolation reality

Do not overstate OpenClaw isolation.

Default workspace separation is not a hard sandbox. The multi-agent docs explicitly say each agent’s workspace is the default current working directory, not a hard sandbox; relative paths resolve inside the workspace, but absolute paths can reach other host locations unless sandboxing is enabled.

For DBOT:

- MCP stdio process:
  - protects Gateway from Python crashes
  - separates Python dependencies from Node
  - enables structured tool protocol
  - does not stop filesystem/network access by itself

- Docker/OS sandbox:
  - required for stronger filesystem/network/user isolation

- Tool allowlists:
  - required to prevent unrelated agents from calling finance tools

- Skill allowlists:
  - required to prevent unrelated agents from inheriting finance behavior

Use least privilege:

- one MCP server per capability boundary

- read-only DB credentials for fact retrieval

- no shell access inside finance MCP unless needed

- narrow tool allowlists per agent

- explicit source policies

- audit every deterministic calculation

Conclusion

OpenClaw separates orchestration from execution: agents plan and coordinate, while tools perform deterministic work. For reliable financial analysis, the correct pattern is to expose computation through typed tools (preferably via MCP) and enforce their use through skills and tool policy. Sub-agents add structured delegation and parallelism but do not replace deterministic execution. A robust setup combines clear agent instructions, strict tool boundaries, and reproducible, stateless tool interfaces.
