Building the Damodaran Bot with OpenClaw (Part 1): From Concept to Architecture

Introduction

In the previous article, we explored how to build a financial agent using OpenClaw as a foundation for real-world automation. That implementation demonstrated an important principle: modern AI systems become useful not when they generate text, but when they interact with structured tools and data pipelines.

This article extends that idea toward a more ambitious goal:

Building a Damodaran-style valuation bot (DBOT) - a system that performs structured equity valuation using established financial methodologies.

The objective is not to invent a new valuation framework. Instead, the goal is to:

reuse well-known financial models (DCF, comparables, sensitivity analysis)

integrate them with LLM-based reasoning

orchestrate everything through a multi-agent system

use OpenClaw as the execution and interaction layer

This first part focuses on system design and conceptual architecture. Later parts will move into implementation details.

Why Build a Damodaran Bot?

Equity valuation is often presented as a formula-driven exercise, but in practice it unfolds as a sequence of interconnected steps. It starts with gathering financial data, but quickly moves beyond that - understanding how the business actually operates, forming a view on where it can grow, and identifying the risks that could disrupt that path. That qualitative perspective is then translated into numbers: assumptions about revenue growth, margins, reinvestment, and cost of capital. From there, a valuation model - most commonly a discounted cash flow (DCF) - is applied, followed by stress-testing those assumptions and comparing the result to current market pricing.

Some parts of this process are straightforward and repeatable. Pulling financial data, normalizing it, and running calculations can be handled deterministically. Other parts are less rigid. Interpreting a company’s strategy, assessing competitive dynamics, or deciding whether growth assumptions are justified requires judgment. The process is therefore split between what can be automated reliably and what still depends on structured reasoning.

LLM-based systems can contribute to both sides, but only if the workflow is made explicit. Treating valuation as a single prompt leads to shallow outputs because it compresses multiple reasoning steps into one opaque operation.

The idea behind a Damodaran Bot is to do the opposite: break valuation into clearly defined stages and coordinate them. Instead of asking for an answer, the system reconstructs the process - step by step - so that each part, from data collection to narrative to modeling, is handled in a controlled and transparent way.

Design Principles

Before defining components, establish constraints:

1. Do not let the LLM compute financial models

All calculations must be:

deterministic

testable

reproducible

2. Separate reasoning from execution

LLM → reasoning, interpretation, narrative

Code → computation, validation, storage

3. Use modular agents, not a monolith

Each component should:

have a clear responsibility

produce structured outputs

be independently testable

4. Keep the workflow explicit

Avoid hidden reasoning. Every step should be:

logged

auditable

reproducible

These principles should be treated as non-negotiable engineering constraints rather than optional guidelines. Each rule exists to prevent a specific failure mode: incorrect calculations, opaque reasoning, tightly coupled components, or irreproducible outputs. Violating them may still produce results, but those results become difficult to verify, debug, or trust. In practice, this means enforcing these rules at the system level - through architecture, validation layers, and strict separation of responsibilities - rather than relying on discipline during development.
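One way to make "logged, auditable, reproducible" concrete is to wrap every workflow step in a small helper that records what went in and what came out. The sketch below is illustrative only: `fingerprint`, `run_logged`, and `compute_margin` are hypothetical names, not part of OpenClaw or any DBOT code, and the figures are made up.

```python
import hashlib
import json


def fingerprint(payload: dict) -> str:
    """Short, stable hash of a JSON-serializable payload (keys sorted)."""
    canonical = json.dumps(payload, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def run_logged(step_name, func, inputs: dict, log: list) -> dict:
    """Run one workflow step and append an audit record to the log."""
    outputs = func(inputs)
    log.append({
        "step": step_name,
        "input_hash": fingerprint(inputs),
        "output_hash": fingerprint(outputs),
    })
    return outputs


# Hypothetical deterministic step: computation lives in code, not in the LLM.
def compute_margin(inputs: dict) -> dict:
    return {"operating_margin": inputs["ebit"] / inputs["revenue"]}


audit_log = []
result = run_logged(
    "compute_margin", compute_margin,
    {"revenue": 200.0, "ebit": 50.0}, audit_log,
)
```

Because the hashes depend only on the step's inputs and outputs, two runs with identical inputs leave identical audit records, which makes a non-reproducible step easy to spot.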

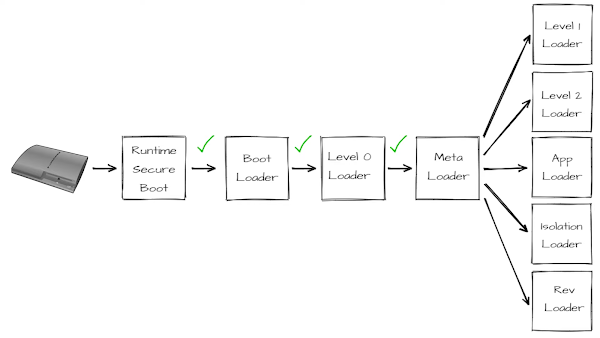

High-Level Architecture

Once a request enters the system, the Supervisor Agent takes over. Its role is to interpret the task and coordinate the sequence of actions required to complete it. Rather than relying on a single reasoning step, the Supervisor delegates work to a set of specialized agents, each responsible for a specific part of the valuation process. These agents run in parallel or in sequence depending on the task, and each contributes structured outputs that build toward a complete valuation.

The outputs from these agents do not directly produce a final answer. Instead, they feed into a deterministic valuation engine implemented in Python. This engine performs all financial calculations in a controlled and reproducible way, ensuring that the numerical results are consistent and testable. The final step combines these results with the reasoning artifacts to produce a report, while also storing the full state of the process for future updates, auditing, or comparison.

This system can be understood as three distinct but connected layers:

Interaction layer → OpenClaw

Reasoning layer → agents

Execution layer → valuation engine

Role of OpenClaw

Before assigning responsibilities, it is important to position OpenClaw correctly within the system. It does not participate in valuation logic, assumption building, or financial modeling. Instead, it acts as the layer that connects user intent with the internal workflow, ensuring that requests are translated into structured tasks and that results are returned in a controlled manner. This separation prevents the interface layer from becoming entangled with domain logic and keeps the overall architecture maintainable.

OpenClaw is not the valuation system itself. It is the operating environment.

Responsibilities

handle user requests

route tasks to the system

execute tools (APIs, scripts)

manage sessions

support human-in-the-loop workflows

Example interactions

"Value NVIDIA"

"Update Tesla valuation with latest earnings"

"Run sensitivity on WACC from 8% to 12%"

"Compare my valuation with sector multiples"

OpenClaw receives these requests and passes them into the structured DBOT workflow.

The Supervisor Agent

The Supervisor is the central orchestrator.

It is responsible for:

understanding the task

deciding which agents to call

tracking state

enforcing rules

Why a Supervisor is needed

Without a Supervisor, the system collapses into loosely connected tools with no coherent execution logic. Individual agents can perform their tasks, but there is no guarantee they will be called in the right order, with the right inputs, or that their outputs will be consistent with each other. In valuation, this leads to fragmented reasoning - for example, assumptions generated without context, or a DCF run on incomplete data.

A single LLM prompt cannot reliably coordinate a multi-step workflow involving data retrieval, interpretation, modeling, and validation. The Supervisor exists to turn this into a controlled process, where each step is explicitly triggered, verified, and connected to the next. It effectively replaces implicit reasoning with an explicit execution plan.

What the Supervisor actually does

The Supervisor is not just a router - it is a stateful decision-maker. It maintains a global view of the valuation process and continuously evaluates what is known, what is missing, and what should happen next.

Its role includes:

converting user intent into a structured workflow

coordinating multiple agents with different responsibilities

ensuring that outputs from one step are valid inputs for the next

revisiting earlier steps when new information invalidates previous assumptions

determining when the system has reached a sufficiently complete and consistent state to produce a final result

In practice, it behaves more like a workflow engine with reasoning capabilities than a simple controller.

Key responsibilities

1. Task decomposition

Example:

Input: "Value NVIDIA"

Decomposed into:

- fetch financials

- analyze business

- generate assumptions

- run DCF

- run sensitivity

- generate report

The Supervisor translates high-level requests into a sequence of executable steps. This decomposition is not static - it can change depending on data availability, company complexity, or intermediate results.
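A minimal sketch of this kind of decomposition is shown here. It assumes keyword matching as a stand-in for the LLM's interpretation of the request, and the step names are hypothetical labels for the steps listed above.

```python
# Hypothetical step names mirroring the decomposition of "Value NVIDIA".
FULL_VALUATION = [
    "fetch_financials",
    "analyze_business",
    "generate_assumptions",
    "run_dcf",
    "run_sensitivity",
    "generate_report",
]


def decompose(request: str, state: dict) -> list[str]:
    """Return the ordered steps for a request, adapted to the current state."""
    text = request.lower()
    if "sensitivity" in text:
        # Narrow request: reuse the existing valuation, only re-run sensitivity.
        steps = ["run_sensitivity", "generate_report"]
    else:
        steps = list(FULL_VALUATION)
    # Skip data retrieval when financials are already present in the state.
    if state.get("financials") and "fetch_financials" in steps:
        steps.remove("fetch_financials")
    return steps


plan = decompose("Value NVIDIA", state={})
```

The point of the sketch is the second half of the function: the plan is derived from the request and from what the state already contains, so it is not a fixed script.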

2. Workflow control

The Supervisor decides:

order of operations

when to re-run steps

when to stop

This includes handling non-linear flows. For example, if the Critic Agent flags unrealistic margins, the Supervisor may send the process back to the Valuation Agent to revise assumptions before proceeding. This iterative control loop is essential for maintaining internal consistency.
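The control loop can be sketched as a bounded retry between a proposing step and a reviewing step. In this sketch `propose` and `critique` are hypothetical stand-ins for the Valuation Agent and Critic Agent, and the 50% margin threshold is an arbitrary illustrative rule.

```python
def run_with_review(propose, critique, max_rounds: int = 3):
    """Re-run the proposal step until the critic raises no issues."""
    feedback = []
    for round_number in range(1, max_rounds + 1):
        assumptions = propose(feedback)
        feedback = critique(assumptions)
        if not feedback:
            return assumptions, round_number
    raise RuntimeError(f"Unresolved issues after {max_rounds} rounds: {feedback}")


# Stand-in for the Valuation Agent: lowers the margin once the critic objects.
def propose(feedback):
    return {"target_margin": 0.35 if feedback else 0.60}


# Stand-in for the Critic Agent: flags margins above an illustrative ceiling.
def critique(assumptions):
    if assumptions["target_margin"] > 0.50:
        return ["target_margin above 50% needs justification"]
    return []


final_assumptions, rounds_used = run_with_review(propose, critique)
```

Capping the number of rounds matters: without it, a critic and a proposer that never agree would loop forever instead of surfacing the disagreement.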

3. State tracking

State includes:

{

"ticker": "NVDA",

"financials": {...},

"assumptions": {...},

"valuation": {...},

"sources": [...],

"open_questions": [...]

}

The Supervisor maintains this state as a single source of truth. Every agent reads from and writes to this structure, ensuring that the system remains synchronized. It also allows the process to be resumed, audited, or compared across different runs.
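The state structure above maps naturally onto a typed container. The following is a sketch, assuming a plain dataclass with JSON round-tripping; the class name `ValuationState` and its methods are illustrative, not an existing API.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ValuationState:
    """Single source of truth shared by all agents (illustrative sketch)."""
    ticker: str
    financials: dict = field(default_factory=dict)
    assumptions: dict = field(default_factory=dict)
    valuation: dict = field(default_factory=dict)
    sources: list = field(default_factory=list)
    open_questions: list = field(default_factory=list)

    def to_json(self) -> str:
        # Sorted keys keep serialized snapshots stable across runs.
        return json.dumps(asdict(self), sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "ValuationState":
        return cls(**json.loads(raw))


state = ValuationState(ticker="NVDA")
state.open_questions.append("Confirm latest share count")
restored = ValuationState.from_json(state.to_json())
```

Serializing the state after each step is what makes it possible to resume a run or compare two runs side by side.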

4. Guardrails

no missing assumptions

no unsupported numbers

no stale data

no LLM-generated math

These constraints are enforced centrally by the Supervisor. Rather than trusting each agent to behave correctly, the Supervisor validates outputs before allowing the workflow to proceed. This prevents silent failures and ensures that the final valuation is based on complete and defensible inputs.
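Three of these guardrails can be expressed as a plain validation function that the Supervisor runs before moving on. This is a sketch under stated assumptions: the `as_of` field, the 120-day staleness threshold, and the function name are all hypothetical. The "no LLM-generated math" rule is not covered here, since it is enforced by routing all calculations through the valuation engine rather than by inspecting state.

```python
from datetime import date

REQUIRED_ASSUMPTIONS = {
    "revenue_growth", "target_margin", "wacc",
    "terminal_growth", "sales_to_capital",
}
MAX_DATA_AGE_DAYS = 120  # illustrative threshold, not a recommendation


def check_guardrails(state: dict, today: date) -> list[str]:
    """Return a list of guardrail violations; an empty list means proceed."""
    problems = []
    missing = REQUIRED_ASSUMPTIONS - set(state.get("assumptions", {}))
    if missing:
        problems.append(f"missing assumptions: {sorted(missing)}")
    if not state.get("sources"):
        problems.append("no sources recorded for inputs")
    as_of = state.get("financials", {}).get("as_of")
    if as_of is None or (today - as_of).days > MAX_DATA_AGE_DAYS:
        problems.append("financial data is missing or stale")
    return problems


state = {
    "assumptions": {"wacc": 0.09, "terminal_growth": 0.03},
    "financials": {"as_of": date(2024, 1, 31)},
    "sources": [],
}
problems = check_guardrails(state, today=date(2024, 12, 1))
```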

Supervisor vs "The Prompt"

The Supervisor introduces structure and control, but it does not remove uncertainty or guarantee correct outcomes. Its decisions - such as how to decompose tasks or which agents to invoke - are still influenced by probabilistic reasoning, which means the workflow itself can be imperfect. In practice, this leads to several limitations:

it may choose a suboptimal sequence of steps

it can overlook missing or implicit context

it cannot fully validate qualitative judgments (e.g., whether a growth narrative is realistic)

it inherits errors from underlying agents and data sources

There is also a trade-off in how much logic is embedded in the Supervisor. If the control logic is too rigid, the system becomes brittle and fails in edge cases that do not match predefined flows. If it is too flexible, the system becomes less predictable and harder to debug. Designing the Supervisor is therefore an exercise in balancing control vs adaptability, not eliminating uncertainty.

Why not just use “skills” and let the LLM orchestrate?

A common alternative is to define each agent as a “tool” or “skill” and rely on the model's built-in tool calling (e.g., Claude tool use or OpenAI function calling) to decide when to use them. At first glance, this seems simpler: expose Data, News, Valuation, and other agents as callable tools, and let the model dynamically orchestrate them based on a prompt.

This approach works for lightweight workflows, but breaks down in structured domains like valuation for several reasons:

1. Lack of persistent state

Tool-based orchestration is typically stateless or weakly stateful. The model decides what to call next based on the current prompt, not on a rigorously maintained global state. In valuation, where assumptions, intermediate outputs, and dependencies must remain consistent across steps, this leads to drift and inconsistencies.

2. Unreliable execution paths

When orchestration is delegated entirely to the LLM, the sequence of steps becomes non-deterministic. The same request may trigger different tool chains across runs. For valuation, this is problematic because:

workflows must be reproducible

missing steps (e.g., skipping sensitivity analysis) are not acceptable

ordering matters (e.g., assumptions before DCF)

3. Weak enforcement of constraints

A prompt can suggest rules (“always run DCF after assumptions”), but it cannot enforce them reliably. The model may ignore, reinterpret, or partially follow instructions. A dedicated Supervisor, by contrast, enforces constraints programmatically - blocking progression if required steps or inputs are missing.
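Programmatic enforcement can be as simple as a prerequisite table checked before each step runs. The sketch below assumes a hypothetical `PREREQUISITES` map and `StepBlocked` exception; the step names are illustrative.

```python
# Hypothetical dependency map: each step lists the steps that must finish first.
PREREQUISITES = {
    "fetch_financials": [],
    "generate_assumptions": ["fetch_financials"],
    "run_dcf": ["generate_assumptions"],
    "run_sensitivity": ["run_dcf"],
}


class StepBlocked(Exception):
    """Raised when a step is requested before its prerequisites are done."""


def require_ready(step: str, completed: set) -> None:
    missing = [p for p in PREREQUISITES[step] if p not in completed]
    if missing:
        raise StepBlocked(f"{step} blocked; missing: {missing}")


completed = {"fetch_financials"}
require_ready("generate_assumptions", completed)  # prerequisites met

try:
    require_ready("run_dcf", completed)  # assumptions were never generated
    blocked_message = ""
except StepBlocked as exc:
    blocked_message = str(exc)
```

Unlike a prompt instruction, this check cannot be reinterpreted: the DCF step does not run until the assumptions step is recorded as complete.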

4. Limited ability to handle iteration

Valuation is inherently iterative. If a Critic flags an issue, the system must revisit earlier steps in a controlled way. Tool-based orchestration struggles with this because it lacks an explicit notion of workflow loops, checkpoints, or rollback. The Supervisor, however, can explicitly re-route execution and maintain consistency across iterations.

5. Debugging and auditability

When orchestration is embedded in prompts, it becomes difficult to trace why a specific sequence of actions was taken. A Supervisor provides:

explicit decision points

structured logs

reproducible execution paths

This is essential for debugging and for any use case where outputs need to be audited.

Supervisor vs OpenClaw itself

It is important to distinguish the Supervisor from OpenClaw.

OpenClaw provides:

the interface

tool execution environment

session and interaction handling

The Supervisor provides:

domain-specific orchestration

valuation logic coordination

state management

rule enforcement

If OpenClaw were used as the Supervisor, domain logic would be embedded into the infrastructure layer. This creates tight coupling between how the system runs and what the system does, making it harder to evolve either independently.

Keeping the Supervisor separate ensures that:

valuation logic remains explicit and testable

workflows can be versioned and improved without changing the runtime

the system can be ported to different execution environments if needed

Core Agents

Each agent has a narrow, well-defined role.

1. Data Agent

Purpose: The Data Agent is responsible for retrieving, cleaning, and standardizing financial data from multiple sources such as APIs, filings, and market feeds. It ensures consistency across accounting formats and time periods so that downstream components can rely on a single, coherent dataset. It also detects anomalies or missing values and flags them for further review rather than silently passing incorrect data.

ticker

revenue history

margins

capital structure

cash, debt

shares outstanding

Tasks

query APIs

normalize accounting formats

detect anomalies
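Two of these tasks, unit normalization and anomaly detection, can be sketched in a few lines. Everything here is an assumption made for illustration: the function names, the 100% year-over-year threshold, and the revenue figures are invented and do not describe any real company or API.

```python
UNIT_SCALE = {
    "units": 1,
    "thousands": 1_000,
    "millions": 1_000_000,
    "billions": 1_000_000_000,
}


def normalize_to_millions(value: float, unit: str) -> float:
    """Convert a reported figure to millions regardless of the source unit."""
    return value * UNIT_SCALE[unit] / 1_000_000


def flag_anomalies(revenue_by_year: dict, max_jump: float = 1.0) -> list[str]:
    """Flag missing values and year-over-year moves above max_jump (1.0 = 100%)."""
    flags = []
    years = sorted(revenue_by_year)
    for year in years:
        if revenue_by_year[year] is None:
            flags.append(f"{year}: missing revenue")
    for prev, curr in zip(years, years[1:]):
        before, after = revenue_by_year[prev], revenue_by_year[curr]
        if before is None or after is None or before == 0:
            continue  # cannot compute a change; the gap is already flagged
        change = (after - before) / abs(before)
        if abs(change) > max_jump:
            flags.append(f"{curr}: revenue changed {change:+.0%} year over year")
    return flags


# Made-up figures for illustration only.
revenue = {2021: 100.0, 2022: None, 2023: 120.0, 2024: 300.0}
flags = flag_anomalies(revenue)
```

The function returns flags instead of repairing the data, which matches the agent's brief: surface problems for review, never silently pass or patch them.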

2. Filings Agent

Purpose: The Filings Agent processes structured and unstructured regulatory documents (e.g., 10-K, 10-Q) to extract information relevant to valuation. Instead of generic summarization, it identifies business segments, revenue drivers, cost structures, and risk disclosures that directly impact assumptions. Its output bridges raw textual disclosures and structured reasoning inputs for the valuation process.

10-K / 10-Q documents

business segments

revenue drivers

risks

geographic exposure

This agent does not summarize blindly. It extracts valuation-relevant information.

3. Conference / Earnings Call Agent

Purpose: This agent analyzes earnings-call transcripts, Q&A sessions, investor-day presentations, and webcast recordings to extract valuation-relevant signals. Its job is not to summarize the call generally, but to identify changes in management tone, guidance, demand outlook, margin pressure, capital allocation, and risk disclosure. It should convert qualitative commentary into structured inputs that can influence the valuation model.

Inputs

Prepared remarks - strategy, reported performance, guidance, segment trends

Q&A session - analyst pressure points, evasive answers, changes in tone

Webcast/audio - emphasis, hesitation, confidence, repeated themes

Investor presentations - long-term targets, TAM claims, margin goals

Guidance changes - revenue, margins, capex, buybacks, debt, restructuring

Outputs

A good output would look like:

{

"growth_signal": "positive",

"margin_signal": "mixed",

"risk_signal": "higher",

"capital_allocation_signal": "neutral",

"key_claims": [

"Management raised full-year revenue guidance",

"Analysts questioned sustainability of gross margin",

"Capex intensity expected to remain elevated"

],

"valuation_implications": {

"revenue_growth": "increase near-term forecast",

"operating_margin": "do not raise terminal margin yet",

"sales_to_capital": "lower efficiency due to higher capex"

}

}

4. News Agent

Purpose: The News Agent filters large volumes of news and event data to isolate items that materially affect valuation assumptions. It classifies each event by its likely impact on growth, margins, reinvestment, or risk, rather than just summarizing content. This ensures that only economically meaningful information influences the model, reducing noise from irrelevant headlines.

Tasks

filter news by relevance

classify impact:

growth

margin

risk

capital intensity

Output example

{

"event": "New AI chip release",

"impact": "positive_growth",

"confidence": 0.7

}
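A filter over events in this shape might look like the sketch below. It assumes the `impact` field follows a `direction_driver` pattern, as in the example output, and that a confidence cutoff of 0.6 is acceptable; both are illustrative choices, and the second and third events are invented.

```python
ALLOWED_DRIVERS = {"growth", "margin", "risk", "capital_intensity"}


def select_material_events(events: list, min_confidence: float = 0.6) -> list:
    """Keep events tied to a valuation driver with sufficient confidence."""
    selected = []
    for event in events:
        # "positive_growth" -> direction "positive", driver "growth"
        direction, _, driver = event["impact"].partition("_")
        if driver in ALLOWED_DRIVERS and event["confidence"] >= min_confidence:
            selected.append({**event, "direction": direction, "driver": driver})
    return selected


events = [
    {"event": "New AI chip release", "impact": "positive_growth", "confidence": 0.7},
    {"event": "CEO interview on a podcast", "impact": "neutral_none", "confidence": 0.9},
    {"event": "Rumored supplier dispute", "impact": "negative_margin", "confidence": 0.3},
]
material = select_material_events(events)
```

Only the first event survives: the second maps to no valuation driver and the third falls below the confidence cutoff.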

5. Comparable Company Agent

Purpose: The Comparable Company Agent identifies a relevant peer group and computes market-based valuation multiples for benchmarking. It provides context for whether the assumptions and outputs of the DCF model are reasonable relative to the market. Its role is diagnostic rather than primary, helping detect inconsistencies or outliers in the valuation.

identify peers

compute multiples:

EV/Sales

EV/EBITDA

P/E

Not to replace DCF, but to:

validate assumptions

detect outliers
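The multiples themselves are simple ratios, so they belong in deterministic code. This sketch assumes enterprise value is approximated as market cap plus debt minus cash and uses the peer median as the benchmark. The peer figures are invented, and the code does not handle negative or zero denominators.

```python
from statistics import median


def multiples(company: dict) -> dict:
    """Compute EV/Sales, EV/EBITDA and P/E from basic inputs."""
    enterprise_value = company["market_cap"] + company["debt"] - company["cash"]
    return {
        "ev_sales": enterprise_value / company["revenue"],
        "ev_ebitda": enterprise_value / company["ebitda"],
        "pe": company["market_cap"] / company["net_income"],
    }


def peer_medians(peers: list) -> dict:
    """Median of each multiple across the peer group."""
    rows = [multiples(peer) for peer in peers]
    return {key: median(row[key] for row in rows) for key in rows[0]}


# Invented peer data, all figures in the same currency unit.
peers = [
    {"market_cap": 1000, "debt": 200, "cash": 100, "revenue": 500, "ebitda": 110, "net_income": 50},
    {"market_cap": 800, "debt": 100, "cash": 100, "revenue": 400, "ebitda": 100, "net_income": 40},
    {"market_cap": 1500, "debt": 0, "cash": 300, "revenue": 400, "ebitda": 100, "net_income": 50},
]
benchmarks = peer_medians(peers)
```

Using the median instead of the mean keeps a single extreme peer from distorting the benchmark, which suits the agent's diagnostic role.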

6. Valuation Agent

Purpose: The Valuation Agent translates qualitative insights and contextual information into structured numerical assumptions required by the valuation engine. It aligns the narrative about the business with quantifiable drivers such as revenue growth, margins, and capital efficiency. Its output serves as the formal interface between reasoning components and deterministic financial computation.

Important

This agent does not compute directly. It prepares structured inputs for the valuation engine.

Outputs

{

"revenue_growth": [...],

"target_margin": 0.35,

"wacc": 0.09,

"terminal_growth": 0.03,

"sales_to_capital": 2.5

}
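Since this output is the interface between reasoning and computation, it is worth validating before the engine sees it. A minimal sketch, assuming a frozen dataclass with range checks; the class name, the specific checks, and the growth path in the usage example are placeholders chosen for illustration.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Assumptions:
    """Validated inputs for the valuation engine (illustrative sketch)."""
    revenue_growth: tuple
    target_margin: float
    wacc: float
    terminal_growth: float
    sales_to_capital: float

    def __post_init__(self):
        if not self.revenue_growth:
            raise ValueError("revenue_growth needs at least one year")
        if not 0.0 < self.target_margin < 1.0:
            raise ValueError("target_margin must be between 0 and 1")
        if self.wacc <= self.terminal_growth:
            raise ValueError("wacc must exceed terminal_growth")
        if self.sales_to_capital <= 0:
            raise ValueError("sales_to_capital must be positive")


def parse_assumptions(raw_json: str) -> Assumptions:
    """Parse agent output; raises on unknown keys, missing keys or bad ranges."""
    data = json.loads(raw_json)
    data["revenue_growth"] = tuple(data["revenue_growth"])
    return Assumptions(**data)


# Placeholder growth path; the other values match the example output.
raw = (
    '{"revenue_growth": [0.20, 0.15, 0.10], "target_margin": 0.35, '
    '"wacc": 0.09, "terminal_growth": 0.03, "sales_to_capital": 2.5}'
)
assumptions = parse_assumptions(raw)
```

Making the object immutable means no later step can quietly alter an assumption after it has been validated.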

7. Sensitivity Agent

Purpose: The Sensitivity Agent evaluates how changes in key assumptions affect the valuation outcome. It systematically varies inputs like growth rates, margins, and discount rates to generate a range of possible values. This helps quantify uncertainty and identify which assumptions have the greatest influence on the final result.

Tasks

vary key inputs

compute valuation ranges

identify most sensitive variables

Output

valuation matrix

sensitivity ranking
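Both outputs can be produced by calling the valuation function repeatedly with perturbed inputs. In the sketch below, `toy_value` is a deliberately simple growing-perpetuity formula standing in for the real engine, and the base inputs and the 10% bump size are illustrative assumptions.

```python
def toy_value(fcf: float, wacc: float, growth: float) -> float:
    """Growing-perpetuity value; a stand-in for the full valuation engine."""
    return fcf * (1 + growth) / (wacc - growth)


def sensitivity_matrix(value_fn, base, x_name, x_values, y_name, y_values):
    """Value for every (x, y) combination, other inputs held at base."""
    matrix = {}
    for y in y_values:
        for x in x_values:
            inputs = {**base, x_name: x, y_name: y}
            matrix[(x, y)] = value_fn(**inputs)
    return matrix


def rank_sensitivity(value_fn, base, bump: float = 0.10):
    """Rank inputs by absolute % change in value after a relative bump."""
    base_value = value_fn(**base)
    changes = []
    for name in base:
        bumped = {**base, name: base[name] * (1 + bump)}
        changes.append((name, (value_fn(**bumped) - base_value) / base_value))
    return sorted(changes, key=lambda item: abs(item[1]), reverse=True)


base = {"fcf": 100.0, "wacc": 0.09, "growth": 0.03}
matrix = sensitivity_matrix(
    toy_value, base, "wacc", [0.08, 0.10, 0.12], "growth", [0.02, 0.03]
)
ranking = rank_sensitivity(toy_value, base)
```

With these inputs the discount rate ranks first, ahead of cash flow and growth, which is the kind of signal the agent is meant to surface.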

8. Critic Agent

Purpose: The Critic Agent performs validation and quality control across the entire workflow. It checks for logical inconsistencies, unsupported assumptions, missing data, and discrepancies between narrative and numerical outputs. Its goal is to prevent flawed or unjustified valuations from reaching the final report.

Checks

missing inputs

inconsistent narrative

unsupported assumptions

unrealistic outputs
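Some of these checks are mechanical enough to write as rules. The sketch below implements two of them and reuses the wording of the example findings given for this agent. The context fields and the crude cost-of-capital floor (risk-free rate plus country risk premium) are simplifying assumptions for illustration, not a complete consistency test.

```python
def critique(assumptions: dict, context: dict) -> list[str]:
    """Return findings; an empty list means no rule-based objection."""
    findings = []

    # Growth above the industry rate is allowed only with a stated reason.
    peak_growth = max(assumptions["revenue_growth"])
    if peak_growth > context["industry_growth"] and not assumptions.get(
        "growth_justification"
    ):
        findings.append(
            "Revenue growth exceeds industry growth without justification"
        )

    # Crude heuristic: cost of capital below this floor looks inconsistent.
    floor = context["risk_free_rate"] + context["country_risk_premium"]
    if assumptions["wacc"] < floor:
        findings.append("WACC inconsistent with country risk")

    return findings


# Invented inputs for illustration.
assumptions = {"revenue_growth": [0.25, 0.20], "terminal_growth": 0.03, "wacc": 0.06}
context = {
    "industry_growth": 0.10,
    "risk_free_rate": 0.04,
    "country_risk_premium": 0.03,
}
findings = critique(assumptions, context)
```

Rule-based checks like these cover only the mechanical part of the Critic's job; judging whether a narrative is coherent still requires the reasoning layer.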

Example

"Revenue growth exceeds industry growth without justification"

"WACC inconsistent with country risk"

9. Writer Agent

Purpose: The Writer Agent converts structured outputs into a coherent, human-readable valuation report. It integrates business description, assumptions, valuation results, and risks into a logically organized narrative. The focus is on clarity and traceability, ensuring that each conclusion can be linked back to underlying data and reasoning.

Structure

business overview

valuation narrative

assumptions

valuation result

risks

conclusion

The Valuation Engine (Deterministic Core)

This is the most critical component.

Why deterministic?

makes correctness verifiable (the same inputs always produce the same outputs)

allows backtesting

keeps hallucinated numbers out of the calculations

Core pipeline

Revenue forecast

→ EBIT forecast

→ NOPAT

→ Reinvestment

→ Free Cash Flow

→ Discounting

→ Terminal value

→ Equity value

→ Value per share

Implementation

Python modules

strict input validation

unit tests
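To make the pipeline concrete, here is a compact sketch of the whole chain in one function. It is heavily simplified and rests on several assumptions that are not part of the design above: a flat `tax_rate` input, the target margin applied from year one instead of converging over time, reinvestment derived from revenue change divided by sales-to-capital, and a Gordon-growth terminal value. All input figures in the usage example are invented.

```python
def dcf_value_per_share(
    base_revenue, revenue_growth, target_margin, tax_rate,
    sales_to_capital, wacc, terminal_growth,
    cash, debt, shares_outstanding,
):
    """Simplified FCFF-style DCF following the pipeline stages above."""
    # Strict input validation before any arithmetic.
    if wacc <= terminal_growth:
        raise ValueError("wacc must exceed terminal_growth")
    if shares_outstanding <= 0 or sales_to_capital <= 0:
        raise ValueError("shares_outstanding and sales_to_capital must be positive")

    def free_cash_flow(prev_revenue, revenue):
        ebit = revenue * target_margin                      # EBIT forecast
        nopat = ebit * (1 - tax_rate)                       # NOPAT
        reinvestment = (revenue - prev_revenue) / sales_to_capital
        return nopat - reinvestment                         # Free cash flow

    revenue = base_revenue
    pv_explicit = 0.0
    for year, growth in enumerate(revenue_growth, start=1):
        next_revenue = revenue * (1 + growth)               # Revenue forecast
        fcf = free_cash_flow(revenue, next_revenue)
        pv_explicit += fcf / (1 + wacc) ** year             # Discounting
        revenue = next_revenue

    # Terminal value on the first cash flow after the explicit period.
    terminal_fcf = free_cash_flow(revenue, revenue * (1 + terminal_growth))
    terminal_value = terminal_fcf / (wacc - terminal_growth)
    pv_terminal = terminal_value / (1 + wacc) ** len(revenue_growth)

    equity_value = pv_explicit + pv_terminal + cash - debt  # Equity value
    return equity_value / shares_outstanding                # Value per share


value = dcf_value_per_share(
    base_revenue=100.0, revenue_growth=[0.10], target_margin=0.20,
    tax_rate=0.25, sales_to_capital=2.0, wacc=0.10, terminal_growth=0.02,
    cash=10.0, debt=20.0, shares_outstanding=10.0,
)
```

Because the function is pure, a unit test can pin its output for known inputs, and any change to the model then shows up as a failing test instead of a silently different valuation.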

Data Flow Example

Let’s walk through a full execution to see how the system moves from a simple user request to a structured valuation output. Each step builds on the previous one, gradually transforming raw data and qualitative inputs into quantified assumptions and, ultimately, an intrinsic value estimate. This sequence also highlights how different agents contribute specialized inputs while remaining coordinated through a single controlled workflow.

Step 1 — User request

"Value NVIDIA"

Step 2 — OpenClaw

receives request

triggers DBOT workflow

Step 3 — Supervisor

initializes state

calls Data Agent

Step 4 — Data Agent

retrieves financials

stores normalized data

Step 5 — Filings Agent

extracts business model

Step 6 — News Agent

identifies recent developments

Step 7 — Conference Agent

analyzes earnings call transcript, Q&A, and webcast

extracts management guidance, tone, and key analyst concerns

translates qualitative signals into structured valuation implications

Step 8 — Valuation Agent

generates assumptions based on data, filings, news, and conference signals

Step 9 — Valuation Engine

computes intrinsic value

Step 10 — Sensitivity Agent

generates scenarios

Step 11 — Critic Agent

validates results

Step 12 — Writer Agent

generates report

Step 13 — OpenClaw

returns result to user

Why This Architecture Works (and What It Is Not)

This architecture is designed to behave like a system, not a prompt. Its strength comes from modularity: each agent has a clearly defined responsibility and can be improved, replaced, or extended without breaking the rest of the workflow. This makes the system maintainable over time, especially as individual components - data sources, models, or reasoning strategies - evolve.

At the same time, the entire process is transparent. Every step is explicitly executed, logged, and traceable, which means the path from input to output can be inspected and audited. This is critical in valuation, where understanding how a number was produced is often more important than the number itself.

Reproducibility is another core property. Given the same inputs and assumptions, the system will produce the same outputs, because all numerical computation is handled deterministically. This allows for consistent comparisons across time, systematic backtesting, and debugging when results look incorrect.

The design is also extensible. New capabilities can be introduced as additional agents - such as macro analysis, options valuation, or credit modeling - without requiring a redesign of the core system. These components can plug into the existing workflow and contribute additional signals or validation layers.

Equally important is defining what this system is not. It is not a chatbot that guesses stock prices, nor a black-box machine learning model that produces outputs without explanation. It is not an end-to-end neural system where reasoning and computation are entangled and opaque. Instead, it is a structured architecture that combines deterministic financial models with probabilistic reasoning components, keeping both roles clearly separated and controlled.

Conclusion

Building a Damodaran-style valuation bot is not about inventing a new model or improving the mathematics of valuation. The real challenge lies in structuring the process itself - breaking it into clear steps, separating interpretation from computation, and coordinating multiple components so they work together reliably.

In this setup, OpenClaw acts as the interaction layer, handling user requests, sessions, and tool execution. The DBOT architecture defines how reasoning is structured and how different agents contribute to the overall process. The valuation engine anchors everything with deterministic, testable numerical outputs. When combined, these pieces form a system that is practical to use, straightforward to validate, and flexible enough to evolve over time - all while staying grounded in established financial methodology.

From here, the focus shifts to implementation. In the next articles, each component will be developed in detail, starting with the deterministic valuation core and then moving through the individual agents that make up the system.
